Two years ago, I wrote a pair of posts (3/29/24 and 4/8/24) about linked attachments—what Microsoft calls “Cloud Attachments”—arguing that producing parties had been getting away with murder by not collecting and searching them. The argument was straightforward: a linked attachment is no less relevant than an embedded one, the tools to collect them exist, and the claimed burdens were overstated. Genuine, but exaggerated.

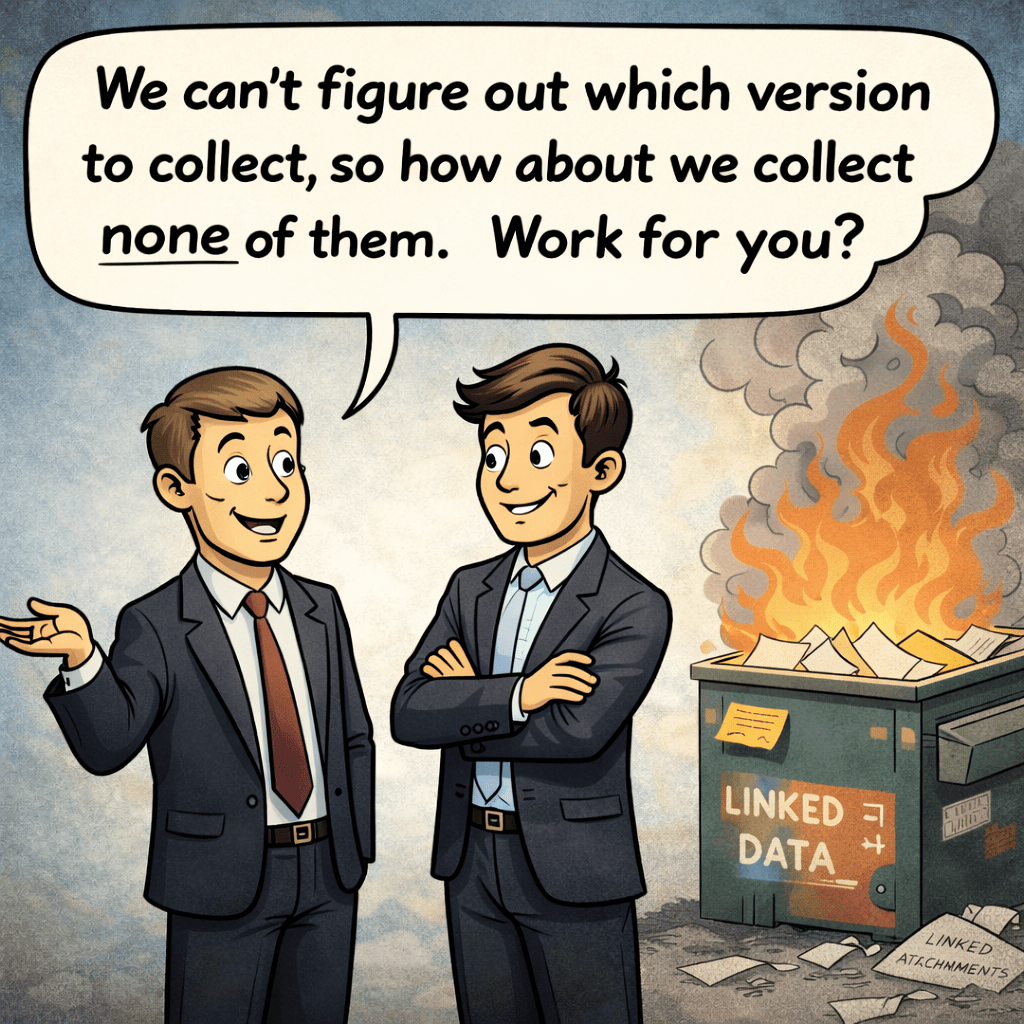

Nothing that’s happened since has changed that core proposition. If anything, developments in case law, the Sedona Conference’s 2025 Commentary on collaboration platform discovery, and the emergence of proposed technical standards have reinforced it. But those same developments carry a risk I want to flag: that the versioning question—which version of a linked attachment is the “right” one—is being elevated in ways that could hand producing parties a shiny new excuse for doing nothing.

What’s Changed in Two Years

The landscape has shifted since, and largely in the right direction.

Courts are beginning to tiptoe toward examining what tools can actually do rather than accepting blanket claims of infeasibility. The Carvana securities litigation is perhaps the most striking example: the court ordered a bounded forensic capability test using a specific tool, then expanded it when the initial pilot supported further testing. That’s a different approach from what we’ve seen before: a court saying, in effect, “show me what you can recover, don’t just tell me you can’t.”

The Sedona Conference published its Commentary on Discovery of Collaboration Platforms Data in 2025, acknowledging the distinct preservation, collection, and production challenges these platforms present. When Sedona identifies a problem, that identification becomes part of the baseline against which “reasonable steps” under Rule 37(e) will be measured. Parties who were aware of these challenges—and by now, every competent e-discovery practitioner should be—will find it increasingly hard to argue that their traditional, email-era workflow was good enough.

And a proposed technical standard—the Reconstruction-Grade eDiscovery Standard, authored by Peter Kozak and Brandon D’Agostino—has articulated an architectural framework for what preservation of collaborative evidence should look like. It’s ambitious and thoughtful. I want to engage with it constructively, because I think it gets several things right. But I also want to sound a caution about how standards like this could be deployed in the real world of discovery disputes.

Two Problems

The RG standard does something valuable: it names and taxonomizes the specific ways that traditional preservation fails when evidence is collaborative, hyperlinked, and versioned. Its framework identifies what it calls the “Preservation Gap” (the referenced content is never preserved at all) and the “Context Gap” (the content is preserved but not in the state it existed at the relevant time). That’s a useful distinction.

But here’s where I part company—not with the standard’s laudable intent, but with the risk of how it may play out in the field.

The standard treats deterministic version resolution—preserving the as-sent version of a linked document, the version that existed when the message was transmitted—as a core conformance requirement. Architecturally, I understand why. If you’re building a system that aspires to reconstruction-grade fidelity, you want to capture the version the recipient would have seen when they clicked the link. That’s the gold standard.

The problem is that the gold standard can become the enemy of any standard at all.

To my eye, the versioning concern has been weaponized. It goes like this: a requesting party asks for linked attachments. The producing party raises the specter of versioning—“Which version do you want? The as-sent version? The as-accessed version? The current version? We can’t be sure which is the ‘right’ one, so the whole exercise is fraught with uncertainty.” And that uncertainty becomes the justification for producing no version. Not the wrong version. No version.

That’s the tail wagging the dog.

The “Dog” Is Collection

The threshold obligation is to collect and search linked attachments. Full stop. A link in an email reveals nothing about the content of the linked document. If you don’t collect the document, you can’t search it. If you can’t search it, you can’t assess it for relevance. And if you can’t assess it for relevance, you’re making a unilateral decision to exclude potentially responsive evidence—evidence that, but for a shift in how email systems handle large files, would have been embedded in the message and collected automatically.

That obligation exists independently of any versioning question. It existed before anyone coined the term “reconstruction-grade.” It existed when I wrote about it two years ago, and it existed for years before that. “Perfect” is not the standard in e-discovery, but neither is “lousy.”

Beware, too, the half-measure. A producing party, pressed on missing linked attachments, may offer to search the email text first and seek out the linked attachment only if the parent email hits on a keyword. This sounds reasonable until you think about how email actually works. It is exceedingly common for a transmitting email to say nothing more than “Please see attached” or “Here’s the draft we discussed,” while the attachment contains all the substantive content. If the email text doesn’t trigger a keyword, the attachment, however rich in relevant material, never gets collected or searched. And even if the attachment is later produced as a loose document, it won’t tie to its “parent” transmitting message.

When we search email families containing embedded attachments, we treat the family as responsive if either the message or the attachment generates a hit. Any workflow that conditions collection of linked attachments on hits in the transmitting email inverts that logic and guarantees that a large share of responsive evidence will be missed.
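For readers who think in code, the difference between the two workflows can be sketched in a few lines of Python. This is an illustration only: the `Family` record, the function names, and the naive substring keyword match are hypothetical stand-ins, not how any review platform actually implements search.

```python
from dataclasses import dataclass


@dataclass
class Family:
    """Hypothetical record: a transmitting email plus its linked document."""
    message_text: str
    attachment_text: str


def hits(text: str, keywords: list[str]) -> bool:
    """Naive case-insensitive substring match, for illustration only."""
    lowered = text.lower()
    return any(k.lower() in lowered for k in keywords)


def family_responsive(fam: Family, keywords: list[str]) -> bool:
    """Embedded-attachment logic: a hit in either member makes the family responsive."""
    return hits(fam.message_text, keywords) or hits(fam.attachment_text, keywords)


def parent_conditioned_responsive(fam: Family, keywords: list[str]) -> bool:
    """Half-measure: the linked document is fetched only if the parent email hits,
    so the outcome turns entirely on the message text."""
    return hits(fam.message_text, keywords)


# A typical transmittal: the email says nothing, the document says everything.
example = Family(
    message_text="Please see attached.",
    attachment_text="Revised indemnification terms for the supply agreement.",
)
keywords = ["indemnification"]

family_result = family_responsive(example, keywords)
half_measure_result = parent_conditioned_responsive(example, keywords)
```

On this example the family-level test flags the family and the parent-conditioned test does not, which is the whole problem in miniature.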

A producing party that collects and searches the current version of a linked attachment has done something meaningful. They’ve brought the document into the review population. They’ve assessed its content against the issues in the case. They’ve preserved the family relationship between message and attachment. They may not have captured the precise version that existed at send time, but they’ve captured a version—one that, in the overwhelming majority of cases, is likely to be the same or substantially similar to the transmitted version.

A producing party that collects nothing because of versioning uncertainty has done nothing. Lousy.

The “Tail” Is Versioning

I don’t dismiss the versioning issue. It’s real, and the RG standard is right to address it. There are cases where the difference between the as-sent version and the current version matters enormously—a contract with terms that changed, a financial model with revised projections, a compliance policy that was softened after the relevant communication. In those cases, producing the wrong version could mislead or, worse, could conceal what the actors actually relied upon.

But how often does this actually happen?

Two years ago, I called for objective analysis: what percentage of cloud attachments are actually modified after transmittal? I’m repeating the call, louder, because the industry still hasn’t answered it.

I have a strong intuition (and I want to be candid that it’s an intuition based on experience, not evidence) that the incidence of post-transmittal modification is modest overall. My suspicion is that fewer than ten to twenty percent of linked attachments are meaningfully modified after being shared, and perhaps far fewer than that. Most cloud attachments are final or near-final documents shared for information, not living collaborative drafts. Someone emails a report, a slide deck, a signed contract. The link is a delivery mechanism, not an invitation to co-author.

But I also suspect the percentage varies widely depending upon the culture. An organization whose culture runs to emailing finished work product will have a very different modification profile than one where teams routinely share early drafts via links for iterative editing in SharePoint. A law firm circulating closing documents will look different from a product team sharing design specs that change daily. The incidence of versioning concerns is likely a function of organizational work style, not some universal constant.

Here’s the point: I don’t have solid metrics. I believe what I’m describing here, but belief is not evidence, and I would readily yield my suspicion to meaningful measurement. The data needed to resolve this question is not exotic. Any organization with a reasonably mature M365 environment could sample and compare the version history of linked attachments against the timestamps of the messages that transmitted them. The analysis would tell us, for a given corpus, what percentage of linked attachments were modified after the transmitting message was sent, how significantly they were modified, and how soon after transmittal the modifications occurred. That’s a study someone should do: a vendor, a consultant, an academic, a standards body. It would replace speculation with evidence and give courts and practitioners a rational basis for calibrating the proportionality of versioning remediation. Likewise, litigants coming to court seeking relief from the duty to collect linked attachments should gather the metrics needed to measure the claimed risk and burden.
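To show how modest the analysis is, here is a minimal Python sketch of the comparison described above. It assumes someone has already exported, for each sampled linked attachment, the sent time of the transmitting message and the save time of each entry in the document's version history. The `LinkedAttachment` record and its field names are hypothetical, and the sketch calls no Purview or other platform API. It also measures only whether and how soon a document changed after transmittal; gauging how significantly it changed would require comparing content, which is left out here.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class LinkedAttachment:
    """Hypothetical record for one sampled linked attachment."""
    doc_id: str
    sent_at: datetime              # when the transmitting message was sent
    version_times: list[datetime]  # save time of each version in the history


def hours_to_first_edit(att: LinkedAttachment) -> Optional[float]:
    """Hours from send to the first version saved after send, or None if unchanged."""
    later = [t for t in att.version_times if t > att.sent_at]
    if not later:
        return None
    return (min(later) - att.sent_at).total_seconds() / 3600.0


def summarize(sample: list[LinkedAttachment]) -> dict:
    """Share of sampled attachments modified after send, and how soon."""
    delays = [d for d in map(hours_to_first_edit, sample) if d is not None]
    total = len(sample)
    return {
        "sampled": total,
        "modified_after_send": len(delays),
        "pct_modified": 100.0 * len(delays) / total if total else 0.0,
        "median_hours_to_first_edit": median(delays) if delays else None,
    }
```

Run against a defensible sample, a summary like this would give a court a number to weigh instead of an assertion. It would also let an organization see whether its own work style puts it at the low or high end of the range.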

Until we have that data, we’re arguing about a problem whose magnitude we don’t grasp, while ignoring a problem whose magnitude is obvious: linked attachments aren’t being collected as they should be.

Don’t Throw Out the Baby

I want to be clear about what I’m not saying. I’m not saying the RG standard is wrong to aspire to as-sent version resolution. I’m not saying versioning doesn’t matter. And I’m not attributing to the standard’s authors any intent to create a new excuse for non-production. Reading the standard carefully, its concept of graduated conformance levels and its emphasis on proportionality suggest the opposite intent.

But standards exist in an adversarial ecosystem. A standard that defines three conformance levels—RG-Core, RG-Plus, RG-Max—can be turned into a shield by a party arguing: “Your Honor, we can’t achieve even RG-Core conformance, so we shouldn’t be required to attempt collection of linked attachments.” That argument confuses the standard’s aspirational architecture with the floor of a party’s discovery obligations.

The floor is not reconstruction-grade fidelity. The floor is reasonable steps under Rule 37(e) and the obligation to search and produce relevant, responsive, non-privileged material. That floor requires, at minimum, that you collect linked attachments using the tools your platform provides, search them, and produce responsive documents—even if you’re producing the current version rather than the as-sent version.

To put it another way: producing the “wrong” version of a responsive document is a problem. Producing no version of a responsive document is a bigger problem.

I’ve been accused of leaning toward the interests of plaintiffs on this topic. That’s neither fair nor accurate. I advocate for evidence. I’m committed to getting to the evidence that resolves disputes in what Rule 1 of the Federal Rules calls a “just, speedy, and inexpensive” fashion. Not perfect. Certainly not at any cost. But I won’t accommodate high-handed, evasive approaches to the duty to produce responsive, non-privileged evidence—and dressing up a refusal to collect linked attachments in the language of versioning complexity is exactly that.

What the Standard Gets Right

Credit where it’s due. Several elements of the RG framework strike me as genuinely constructive:

Exception transparency. The standard requires structured records of what couldn’t be collected and why. In the current landscape, failures are silent. A linked attachment that can’t be retrieved simply disappears—no record that it was attempted, no record that it failed, no record of why. Requiring a producing party to document its failures is a significant improvement over the status quo, where the absence of evidence is invisible. Notably, courts have already begun requiring this kind of transparency on an ad hoc basis. In the Uber litigation, Judge Cisneros ordered two custom metadata fields—“Missing Google Drive Attachments” and “Non-Contemporaneous”—to flag gaps and version discrepancies in the production. What the RG standard proposes as a systemic architectural requirement, courts are already imposing case by case. Formalizing that expectation is a natural and constructive next step.

The Preservation Gap vs. Context Gap distinction. Naming these as separate failure modes is useful because they have different legal implications. The Preservation Gap—evidence that was never preserved at all—maps cleanly to Rule 37(e). The Context Gap—evidence preserved in the wrong state—is doctrinally murkier. Courts don’t yet have a clean framework for “you preserved it, but what you preserved isn’t what was communicated.” Distinguishing the two helps practitioners and courts think more precisely about what went wrong and what remedies are appropriate.

Capability testing as an emerging judicial norm. The companion post to the standard highlights Carvana and the broader trajectory of courts ordering parties to demonstrate what their tools can do. This is a welcome and overdue development. The e-discovery conversation around linked attachments has too often been dominated by conclusory assertions of infeasibility. Capability testing replaces assertion with demonstration, and that benefits everyone—including producing parties who have invested in the right tools and want credit for doing so.

Where We Go from Here

The path forward requires distinguishing between the immediate obligation and the aspirational architecture.

The immediate obligation is collection. If you’re on Microsoft 365, use Purview. If you’re on Google Workspace, use Vault. These tools aren’t perfect, but they exist, and they collect linked attachments. The version you collect may be the current version rather than the as-sent version. That’s a known limitation, not a reason to collect nothing.

The aspirational architecture is reconstruction-grade fidelity—as-sent version resolution, deterministic exception handling, reproducible exports. That’s where the industry needs to go. Tools like Forensic Email Collector are already demonstrating that historical version recovery is technically possible in many cases. The Carvana court’s willingness to order capability testing suggests that judges are ready to push the envelope.

But the bridge between those two isn’t “wait until perfect tools exist.” The bridge is “do what you can now, document what you can’t, and improve your capabilities over time.”

That’s what proportionality actually means. Not perfection. Not paralysis. But reasonable, good-faith efforts commensurate with the stakes and the state of the art.

The versioning problem will resolve because courts will order testing, because tools will improve, because someone will finally produce the empirical data on post-transmittal modification rates (pretty please), and because standards like the RG framework will mature. These are all good-faith efforts to move the law and the industry forward, and they deserve recognition.

In the meantime, the producing party’s obligation is clear: collect the linked attachments, search them, and produce what’s responsive.

The tail does not get to wag the dog.

Hat tip to Doug Austin for highlighting the publication of the Reconstruction-Grade eDiscovery Standard on his eDiscovery Today blog. Doug continues to be an indispensable resource for practitioners trying to keep pace with developments in this space.

© 2026 Craig D. Ball. All rights reserved.