Lawyers using AI keep turning up in the news for all the wrong reasons—usually because they filed a brief brimming with cases that don’t exist. The machines didn’t mean to lie. They just did what they’re built to do: write convincingly, not truthfully.

When you ask a large language model (LLM) for cases, it doesn’t search a trustworthy database. It invents them. The result looks fine until a human (a judge, an opponent, or an intern with Westlaw access) checks. That’s when fantasy law meets federal fact.

We call these fictions “hallucinations,” which is a polite way of saying “making shit up,” and though lawyers are duty-bound to catch them before they reach the docket, some don’t. An approaching deadline and a confident-sounding computer make a dangerous mix.

Perhaps a Useful Guardrail

It struck me recently that the legal profession could borrow a page from the digital forensics world, where we rely on something called the NIST National Software Reference Library (NIST NSRL). The NSRL is a public database of hash values for known software files. When a forensic examiner analyzes a drive, the NSRL helps them skip over familiar system files (Windows DLLs and friends) so they can focus on what’s unique or suspicious.

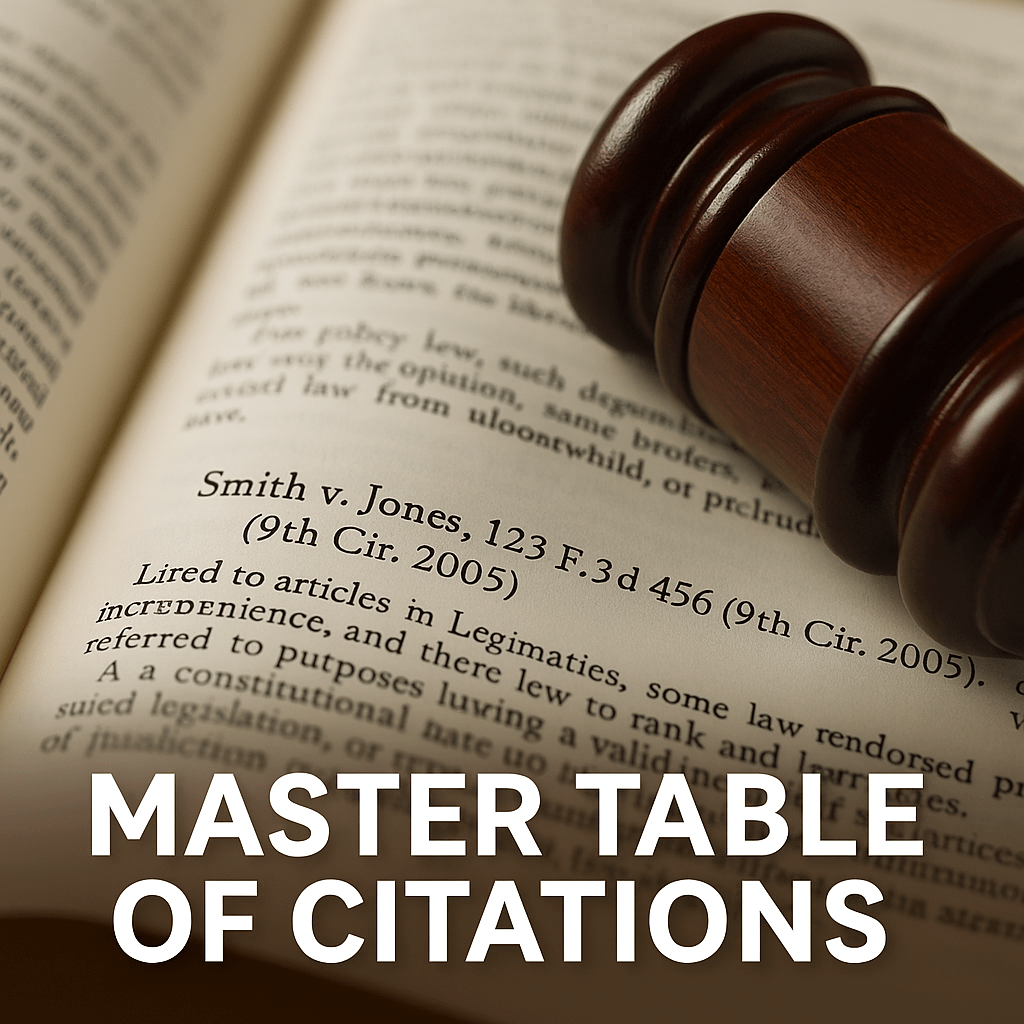
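For readers who haven’t seen known-file filtering in action, here is a minimal Python sketch of the idea. It is an illustration only: the `KNOWN_HASHES` set, the sample file contents, and the `unfamiliar_files` helper are all hypothetical stand-ins, and a real examiner would load digests from the NSRL’s published reference data rather than compute them from toy byte strings.

```python
import hashlib

# Hypothetical stand-in for an NSRL-style reference set: digests of known files.
KNOWN_FILE_CONTENTS = [b"stock system library", b"stock system font"]
KNOWN_HASHES = {hashlib.sha1(data).hexdigest() for data in KNOWN_FILE_CONTENTS}


def unfamiliar_files(files: dict[str, bytes]) -> list[str]:
    """Return the names of files whose SHA-1 digest is not in the known set."""
    return sorted(
        name
        for name, data in files.items()
        if hashlib.sha1(data).hexdigest() not in KNOWN_HASHES
    )


# A toy "drive": two familiar system files and one file worth a closer look.
drive = {
    "kernel32.dll": b"stock system library",
    "arial.ttf": b"stock system font",
    "notes.txt": b"meet me at the usual place",
}
print(unfamiliar_files(drive))
```

The familiar files drop out, and only the unknown one is left for the examiner’s attention.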

So here’s a thought: what if we had a master table of genuine case citations—a kind of NSRL for case citations?

Picture a big, continually updated, publicly accessible table listing every bona fide reported decision: the case name, reporter, volume, page, court, and year. When your LLM produces Smith v. Jones, 123 F.3d 456 (9th Cir. 2005), your drafting software checks that citation against the table.

If it’s there, fine: it probably references a genuine reported case.

If it’s not, flag it for immediate scrutiny.

Think of it as a checksum for truth. A simple way to catch the most common and indefensible kind of AI mischief before it becomes Exhibit A at a disciplinary hearing.
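The lookup itself could be very small. The Python sketch below assumes a table keyed on volume, reporter, and page; the `Citation` type, the two sample rows in `MASTER_TABLE`, and the `check` function are hypothetical names chosen for illustration, not part of any existing tool.

```python
from typing import NamedTuple


class Citation(NamedTuple):
    volume: int
    reporter: str
    page: int


# Hypothetical sample rows; a real table would hold millions of entries.
MASTER_TABLE = {
    Citation(347, "U.S.", 483): "Brown v. Board of Education",
    Citation(5, "U.S.", 137): "Marbury v. Madison",
}


def check(citation: Citation) -> str:
    """Report whether a citation appears in the table or needs human review."""
    if citation in MASTER_TABLE:
        return "listed"
    return "FLAG: not in table, verify by hand"


print(check(Citation(347, "U.S.", 483)))   # present in the sample table
print(check(Citation(123, "F.3d", 456)))   # absent from the sample table
```

A “listed” result says only that something is reported at that volume and page. It says nothing about whether the case supports the proposition it is cited for.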

The Obstacles (and There Are Some)

Of course, every neat idea turns messy the moment you try to build it.

Coverage is the first challenge. There are millions of decisions, with new ones arriving daily. Some are published, some are “unpublished” but still precedential, and some live only in online databases. Even if we limited the scope to federal and state appellate courts, keeping the table comprehensive and current would be an unending job, though not an insurmountable one.

Then there’s variation. Lawyers can’t agree on how to cite the same case twice. The same opinion might appear in multiple reporters, each with its own abbreviation. A master table would have to normalize all of that—an ambitious act of citation herding.

And parsing is no small matter. AI tools are notoriously careless about punctuation. A missing comma or swapped parenthesis can turn a real case into a false negative. Conversely, a hallucinated citation that happens to fit a valid pattern could fool the filter, which is why a table lookup can’t be the only check.
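Both failure modes can be softened, at least in a sketch. The Python below assumes a deliberately forgiving pattern that ignores commas and parentheses, then compares the cited case name against the name on file, so a made-up name attached to a real volume and page is still flagged. The `CORE` pattern, `TABLE`, `squash`, and `verify` are hypothetical, and the name comparison is crude: it would wrongly flag a legitimate short form such as “Brown v. Bd. of Educ.”

```python
import re

# Volume, reporter, page. Commas and parentheses are ignored entirely.
CORE = re.compile(r"(\d+)\s+([A-Z][A-Za-z0-9. ]*?)\s+(\d+)(?![A-Za-z0-9])")


def squash(text: str) -> str:
    """Lowercase and drop everything except letters and digits."""
    return re.sub(r"[^a-z0-9]", "", text.lower())


# Hypothetical one-row table keyed on (volume, squashed reporter, page).
TABLE = {(347, "us", 483): "Brown v. Board of Education"}


def verify(text: str) -> str:
    """Classify a citation string against the sample table."""
    match = CORE.search(text)
    if match is None:
        return "unparseable"
    key = (int(match.group(1)), squash(match.group(2)), int(match.group(3)))
    listed_name = TABLE.get(key)
    if listed_name is None:
        return "not found"
    cited_name = text[: match.start()].strip(" ,")
    if squash(cited_name) != squash(listed_name):
        return "name mismatch"
    return "match"


print(verify("Brown v. Board of Education 347 U. S. 483 )1954("))
print(verify("Smith v. Jones, 347 U.S. 483 (1954)"))
print(verify("Smith v. Jones, 123 F.3d 456 (9th Cir. 2005)"))
```

The first string is mangled but still resolves to a listed case. The second borrows a real volume and page for a different case name and is caught by the name comparison. The third simply isn’t in the sample table.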

Lastly, governance. Who would maintain the thing? Westlaw and Lexis maintain comprehensive citation data, but guard it like Fort Knox. Open projects such as the Caselaw Access Project and the Free Law Project’s CourtListener come close, but they’re not quite designed for this kind of validation task. To make it work, we’d need institutional commitment—perhaps from NIST, the Library of Congress, or a consortium of law libraries—to set standards and keep it alive.

Why Bother?

Because LLMs aren’t going away. Lawyers will keep using them, openly or in secret. The question isn’t whether we’ll use them—it’s how safely and responsibly we can do so.

A public master table of citations could serve as a quiet safeguard in every AI-assisted drafting environment. The AI could automatically check every citation against that canonical list. It wouldn’t guarantee correctness, but it would dramatically reduce the risk of citing fiction. Not coincidentally, it would have prevented most of the public excoriation of careless counsel we’ve seen.

Even a limited version (a federal table, or one covering each state’s highest court) would be progress. Universities, courts, and vendors could all contribute. Every small improvement to verifiability helps keep the profession credible in an era of AI slop, sloppiness, and deepfakes.

No Magic Bullet, but a Sensible Shield

Let’s be clear: a master table won’t prevent all hallucinations. A model could still misstate what a case holds, or cite a genuine decision for the wrong proposition. But it would at least help keep the completely fabricated ones from slipping through unchecked.

In forensics, we accept imperfect tools because they narrow uncertainty. This could do the same for AI-drafted legal writing—a simple checksum for reality in a profession that can’t afford to lose touch with it.

If we can build databases to flag counterfeit currency and pirated software, surely we can build one to spot counterfeit law?

Until that day, let’s agree on one ironclad proposition: if you didn’t verify it, don’t file it.